How Patterns and TBDs Make AI-Generated Requirements Better (Part 3/5)

In Part 2, I argued that we should not use an LLM for every requirement-writing rule. Programmatic checks handle a lot, NLP helps with structure, and the LLM earns its place on the genuinely semantic parts.

But once that hybrid checking workflow is in place, a different problem shows up: detecting violations is not the same as producing a good requirement.

A requirement can pass all the rules and still be weak. It can be inconsistent with the rest of the project, leave key values unstated, or sound polished while hiding missing information. That is where patterns and TBDs become important.

Why rule checking alone is not enough

Suppose a stakeholder says: "The robot should pick small components accurately in the required workspace."

Rule checking tells us a lot. We can flag "accurately" and "required" as vague. We can suggest measurable wording. We can even produce a cleaner-sounding sentence.

But we still have a deeper problem: What kind of requirement is this supposed to become?

Is it functional? A performance requirement? Should it follow a basic "shall" form, an event-driven "When ... shall ..." structure, or something more specific?

And if the workspace radius, component size, or accuracy threshold is not yet known, should the system invent a value?

That is exactly where free rewriting becomes risky. Without structure, the LLM may produce something that sounds more formal but is not actually better engineering.

Patterns give the requirement a shape. TBDs (To Be Determined) keep the system honest when important values are still unknown.

Requirement statement patterns

When I say patterns here, I do not mean prompt patterns. I mean requirement statement patterns, i.e., agreed sentence structures that help express requirements consistently.

The pattern catalog in the current implementation is grounded in the INCOSE Guide to Writing Requirements. It includes:

- basic subject-verb-object forms

- measurable outcome patterns

- conditional forms: `When`, `While`, `If/then`, `Where`

- ISO/IEC/IEEE 29148-style conditional structures

- type-specific patterns for functional, performance, environmental, and design constraint requirements

This matters because different kinds of requirements should not all be rewritten into the same generic "The system shall ..." sentence.

An event-driven behavior belongs in a `When ... the system shall ...` structure. A state-dependent requirement works better with `While ...`.

A design constraint should not look like a performance requirement. Patterns are not just formatting. They encode engineering intent into the shape of the sentence.

How patterns and TBDs make requirements more useful

Why patterns improve rewriting

Three reasons.

First, consistency. If requirements describing similar situations are written in very different forms, the set becomes harder to review, trace, and verify.

Second, structure forces specificity. A pattern requires the writer or the LLM to fill specific roles: entity, action, condition, measurable outcome, trigger, constraint. That is much better than asking a model to "make this sound formal."

Third, patterns make gaps visible. If a pattern expects a measurable outcome and none is available, that absence becomes explicit instead of being hidden behind vague wording.

That last point connects directly to TBDs.
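To make the "gaps become visible" idea concrete, here is a minimal sketch. It is not the project's code: the pattern text, the slot names, and the `<TBD:...>` marker format are all assumptions chosen for illustration. The point is only that a pattern with named slots can report what is missing instead of papering over it.

```python
from string import Formatter

# Hypothetical event-driven pattern with a measurable outcome slot.
PATTERN = "When {trigger}, the {entity} shall {action} within {threshold}."

def fill_pattern(pattern: str, values: dict[str, str]) -> tuple[str, list[str]]:
    """Fill the known slots and report the ones that are still missing."""
    slots = [name for _, name, _, _ in Formatter().parse(pattern) if name]
    missing = [s for s in slots if s not in values]
    # Missing slots are rendered as visible markers instead of being guessed.
    filled = {s: values.get(s, f"<TBD:{s}>") for s in slots}
    return pattern.format(**filled), missing

draft, gaps = fill_pattern(PATTERN, {
    "trigger": "a pick command is received",
    "entity": "robot",
    "action": "reach the target position",
})
print(draft)  # the threshold slot shows up as an explicit marker
print(gaps)   # the list of slots nobody has provided yet
```

A free-form rewrite has no equivalent of `gaps`. That list is exactly what turns into TBD items in the next step.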

TBDs are not just placeholders

In many engineering documents, TBD is treated as something to avoid. In practice, unresolved values are part of real projects.

The bad way to handle that is to hide the uncertainty, either by inventing a value or by leaving the sentence vague.

The better way is to represent it explicitly.

In the current implementation, TBDs are structured project objects. They carry a type (integer, float, boolean, enum, range), a unit, a description, an owner role, a milestone, and a status. They are not blank slots. They are backlog items with engineering metadata.

That makes a big difference. Instead of rewriting a vague requirement by inventing a number, the system can say: "This requirement needs a value here. That value is not known yet. It stays open." That is far more useful than hallucinated precision.
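As a rough illustration of what "structured project object" can mean, here is a sketch of a TBD record with the fields listed above. The class name, the field names, the status values, and the `TBD[...]` rendering are my assumptions for this example, not the actual schema.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Union

class TbdType(Enum):
    INTEGER = "integer"
    FLOAT = "float"
    BOOLEAN = "boolean"
    ENUM = "enum"
    RANGE = "range"

class TbdStatus(Enum):
    # Hypothetical lifecycle; the real status values may differ.
    OPEN = "open"
    APPROVED = "approved"

@dataclass
class Tbd:
    key: str
    type: TbdType
    unit: str
    description: str
    owner_role: str
    milestone: str
    status: TbdStatus = TbdStatus.OPEN
    value: Union[int, float, bool, str, None] = None

    def render(self) -> str:
        """Text to place in a requirement: the value if approved, else a marker."""
        if self.status is TbdStatus.APPROVED and self.value is not None:
            return f"{self.value} {self.unit}".strip()
        return f"TBD[{self.key}]"

accuracy = Tbd(
    key="positioning_accuracy",
    type=TbdType.FLOAT,
    unit="mm",
    description="Maximum allowed end-effector positioning error",
    owner_role="systems engineer",
    milestone="preliminary design review",
)
print(accuracy.render())  # stays a marker until someone approves a value
```

The design choice worth noticing is that `render()` never returns a number unless a human has moved the item to an approved state.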

Approved values vs unresolved values

One of the most useful design choices in the project is that not all missing data is treated the same way.

Some project facts are already known and approved. For the SCARA robot case, the catalog already contains approved values for controller type, motor type, degrees of freedom, rod dimensions, kinematics support, and other grounded facts.

Other values are genuinely unknown and must remain unresolved until the team approves them.

The workflow distinguishes between the two. It prevents the system from re-asking for things the project already knows. And it prevents the LLM from inventing values for things the project has not decided yet.
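The two-way distinction can be sketched as a single lookup. Everything here is hypothetical: the function name, the parameter keys, and especially the example value, which is a placeholder and not taken from the real SCARA catalog.

```python
def resolve(key: str, approved: dict[str, str], open_tbds: set[str]) -> tuple[str, str]:
    """Return (text, source) for a parameter without ever inventing a value."""
    if key in approved:
        # Known fact: reuse it, do not ask the user again.
        return approved[key], "approved"
    # Unknown: register it once and keep it visibly open.
    open_tbds.add(key)
    return f"TBD[{key}]", "unresolved"

# Placeholder catalog entry for illustration only.
approved_values = {"degrees_of_freedom": "4"}
open_items: set[str] = set()

print(resolve("degrees_of_freedom", approved_values, open_items))
print(resolve("workspace_radius", approved_values, open_items))
print(sorted(open_items))
```

There is deliberately no third branch that guesses. If a key is not approved, the only possible outcome is an open item.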

How pattern selection works in practice

Pattern selection does not have to be manual or fully model-driven.

In the current workflow, user requirements are embedded and scored against the pattern catalog using vector similarity. The system retrieves a small set of candidate patterns ranked by how well they match the intent of the requirement.

This matters because pattern selection is rarely a purely linguistic problem. Multiple patterns are often plausible, and a human may still want to choose. The workflow supports that: patterns can be selected manually when needed.

The goal is not to pick the one true pattern automatically. It is to narrow the space and make the next step better guided.
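Here is a small, self-contained sketch of similarity-based pattern retrieval. To keep it runnable without external services, `embed` is a toy bag-of-words vector standing in for a real embedding model, and the three catalog descriptions are invented for this example. Only the ranking logic (cosine similarity, top-k candidates) reflects the idea described above.

```python
import math
import re
from collections import Counter

# Words too common in requirement text to say anything about intent.
STOPWORDS = {"the", "a", "an", "shall", "system", "is", "in", "or"}

def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: a bag-of-words count vector."""
    tokens = re.findall(r"[a-z]+", text.lower())
    return Counter(t for t in tokens if t not in STOPWORDS)

def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

# Hypothetical catalog: pattern name -> short description of its intent.
CATALOG = {
    "event_driven": "when a trigger event occurs the system shall perform an action",
    "state_driven": "while the system is in a state the system shall perform an action",
    "performance": "the system shall perform an action within a measurable time or accuracy",
}

def top_patterns(requirement: str, k: int = 2) -> list[tuple[str, float]]:
    """Rank catalog patterns by similarity and keep the k best candidates."""
    query = embed(requirement)
    scored = [(name, cosine(query, embed(desc))) for name, desc in CATALOG.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)[:k]

candidates = top_patterns("When the gripper closes, the robot shall lift the component.")
for name, score in candidates:
    print(f"{name}: {score:.2f}")
```

Returning a short ranked list rather than a single winner is what leaves room for the manual choice mentioned above.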

If you want to see how these requirement patterns actually look, I have collected around 14 INCOSE-inspired patterns in this GitHub repo…

How patterns and TBDs work together

The real value appears when both are used together.

Patterns provide the structure. TBDs fill the unresolved slots without pretending the project already knows the answer.

The LLM is no longer rewriting in a vacuum. It gets guidance about what kind of requirement is intended, which project values are approved and reusable, and which values must stay open.

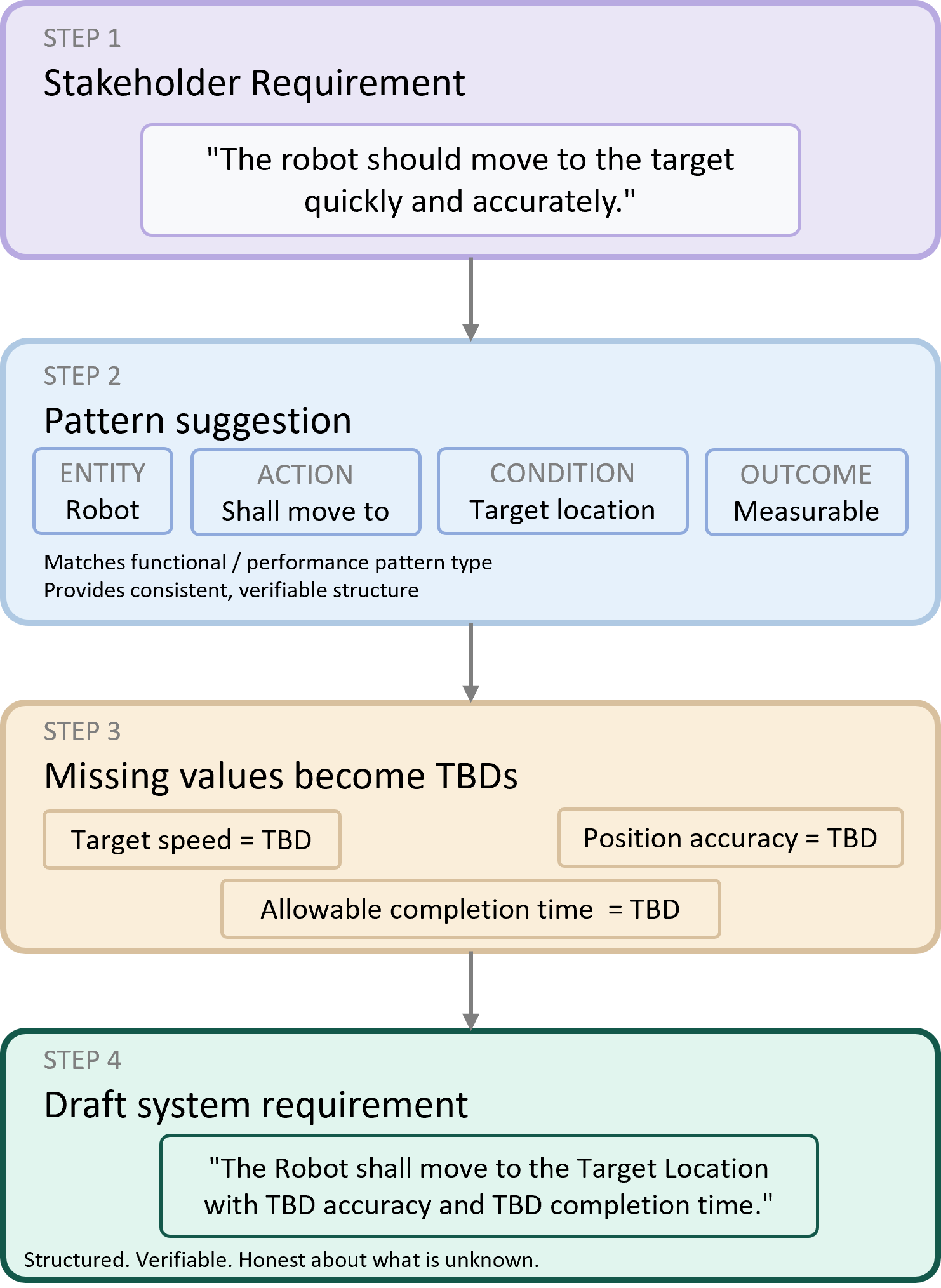

Here is a concrete example. Imagine this input: "The robot should move to the target quickly and accurately."

A naive approach: ask the LLM to rewrite this into something nicer.

A better approach:

1. Recognize that this likely needs a functional/performance pattern

2. Identify that "quickly" and "accurately" imply missing measurable thresholds

3. Reuse any already approved project values that are relevant

4. Create explicit TBD items for the missing thresholds if they do not already exist

5. Generate a draft requirement aligned with the selected pattern

The result gives the team something they can review and improve, not something they have to distrust.
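The five steps can be compressed into a short sketch. This is an illustration under heavy assumptions: the pattern is hard-coded rather than retrieved, the vague-word table is invented, and the approved value `1.5 s` is a made-up placeholder, not a project fact.

```python
# Hypothetical mapping from vague words to the parameter they imply (step 2).
VAGUE_TERMS = {"quickly": "move_time", "accurately": "positioning_accuracy"}

# Step 1 is assumed done: a performance-style pattern has been selected.
PATTERN = ("The robot shall move to the target position within {move_time} "
           "with a positioning error of at most {positioning_accuracy}.")

def draft_requirement(text: str, approved: dict[str, str],
                      backlog: list[str]) -> tuple[str, list[str]]:
    """Return a pattern-aligned draft and the vague words that were flagged."""
    flagged = [word for word in VAGUE_TERMS if word in text.lower()]
    slots = {}
    for param in VAGUE_TERMS.values():      # every slot the pattern needs
        if param in approved:               # step 3: reuse approved values
            slots[param] = approved[param]
        else:                               # step 4: create the TBD only once
            if param not in backlog:
                backlog.append(param)
            slots[param] = f"TBD[{param}]"
    return PATTERN.format(**slots), flagged  # step 5: generate the draft

tbd_backlog: list[str] = []
draft, flagged = draft_requirement(
    "The robot should move to the target quickly and accurately.",
    approved={"move_time": "1.5 s"},        # illustrative value only
    backlog=tbd_backlog,
)
print(draft)
print(flagged, tbd_backlog)
```

In a real workflow the final step would hand the pattern, the approved values, and the open TBDs to the LLM instead of using plain string formatting. The control flow around it would look much the same.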

Avoiding duplicate TBDs

One subtle problem with TBD-heavy workflows is duplication.

Different people, or different model outputs, may refer to the same unresolved concept in slightly different words. One draft might say "cycle time", another "task duration", a third "motion completion time".

If every draft creates a fresh placeholder, the backlog becomes chaotic.

That is why the implementation tries to canonicalize TBDs and reuse similar approved parameters when they already exist. The system is not just creating placeholders; it is building a reusable parameter memory for the project.

Without this, TBD handling quickly becomes just another source of inconsistency.
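One simple way to picture canonicalization is an alias table in front of the backlog. This sketch assumes a hand-maintained table and a hypothetical canonical key `cycle_time`. It is a stand-in for whatever matching the real system uses, which could just as well be embedding-based.

```python
# Hypothetical alias table: normalized phrase -> canonical parameter key.
ALIASES = {
    "task duration": "cycle_time",
    "motion completion time": "cycle_time",
}

def canonical_key(name: str) -> str:
    """Map a free-text parameter name to one canonical key."""
    normalized = " ".join(name.lower().split())
    return ALIASES.get(normalized, normalized.replace(" ", "_"))

def register_tbd(name: str, backlog: dict[str, list[str]]) -> str:
    """Add a mention to the backlog, reusing the entry if the concept exists."""
    key = canonical_key(name)
    backlog.setdefault(key, []).append(name)
    return key

backlog: dict[str, list[str]] = {}
for mention in ["cycle time", "Task duration", "motion  completion time"]:
    register_tbd(mention, backlog)

print(backlog)  # one backlog entry that remembers all three wordings
```

Keeping the original wordings next to the canonical key is useful in review: it shows which drafts were talking about the same open value.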

Why this improves the LLM output

From a prompt-engineering perspective, patterns and TBDs do something very useful: they reduce the freedom of the rewrite without making it brittle.

The LLM still has room to produce readable text. But it has much better guidance about:

- the intended requirement structure

- approved project terminology

- relevant states, triggers, glossary terms, acronyms, and units

- which values are known

- which values must remain unresolved

That is a much healthier setup than simply telling the model to "rewrite this requirement professionally."
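To show what that guidance might look like when it reaches the model, here is a sketch of a prompt builder. The function name, the section labels, and all example inputs are assumptions for illustration. The actual prompt format is not shown in this post.

```python
def build_rewrite_prompt(requirement: str, pattern: str, glossary: list[str],
                         approved: dict[str, str], open_tbds: list[str]) -> str:
    """Assemble a constrained rewrite prompt from project context."""
    lines = [
        "Rewrite the requirement below using the given pattern.",
        f"Pattern: {pattern}",
        "Approved terms: " + ", ".join(glossary),
        "Approved values (reuse exactly):",
        *[f"- {key} = {value}" for key, value in approved.items()],
        "Unresolved values (keep as TBD markers, do not invent numbers):",
        *[f"- TBD[{key}]" for key in open_tbds],
        f"Requirement: {requirement}",
    ]
    return "\n".join(lines)

prompt = build_rewrite_prompt(
    requirement="The robot should move to the target quickly and accurately.",
    pattern="The <entity> shall <action> within <threshold>.",
    glossary=["robot", "target position"],
    approved={"degrees_of_freedom": "4"},   # illustrative value only
    open_tbds=["move_time", "positioning_accuracy"],
)
print(prompt)
```

The model still writes the sentence, but the pattern, the vocabulary, and the boundary between known and unknown values are fixed before it starts.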

Patterns improve consistency. TBDs improve honesty. Both matter.

Where the human still matters

None of this removes the human from the process.

Someone still has to decide whether the suggested pattern matches the actual engineering intent. Someone still has to approve TBD values. Someone still has to judge whether a design constraint is justified.

But that is exactly the point. The workflow is not trying to replace engineering judgment. It is trying to make the draft artifacts more structured, more reviewable, and more reusable before that judgment is applied.

What comes next

By this point, the workflow is doing more than rule checking.

It is checking requirements against INCOSE-based writing rules, selecting appropriate patterns, identifying missing values as structured TBDs, reusing approved project knowledge, and guiding the LLM toward better drafts.

The interesting question now is:

What does the full end-to-end workflow look like when rules, patterns, TBDs, project context, and human review all work together?

That is what I will cover in Part 4.

A quick question for you:

When key requirement values are still unknown, how does your team handle them today?

Do you leave them vague, use placeholders like TBDs, or avoid drafting the requirement until everything is known?

I'd especially love to hear from anyone who is looking to integrate AI-assisted workflows with requirements management tools like DOORS, Polarion ALM, or Jira. Feel free to drop your thoughts below.

A quick note: I used Claude to help with the flow and structure of this post. But every idea here is mine, and I read (and meant) every word.